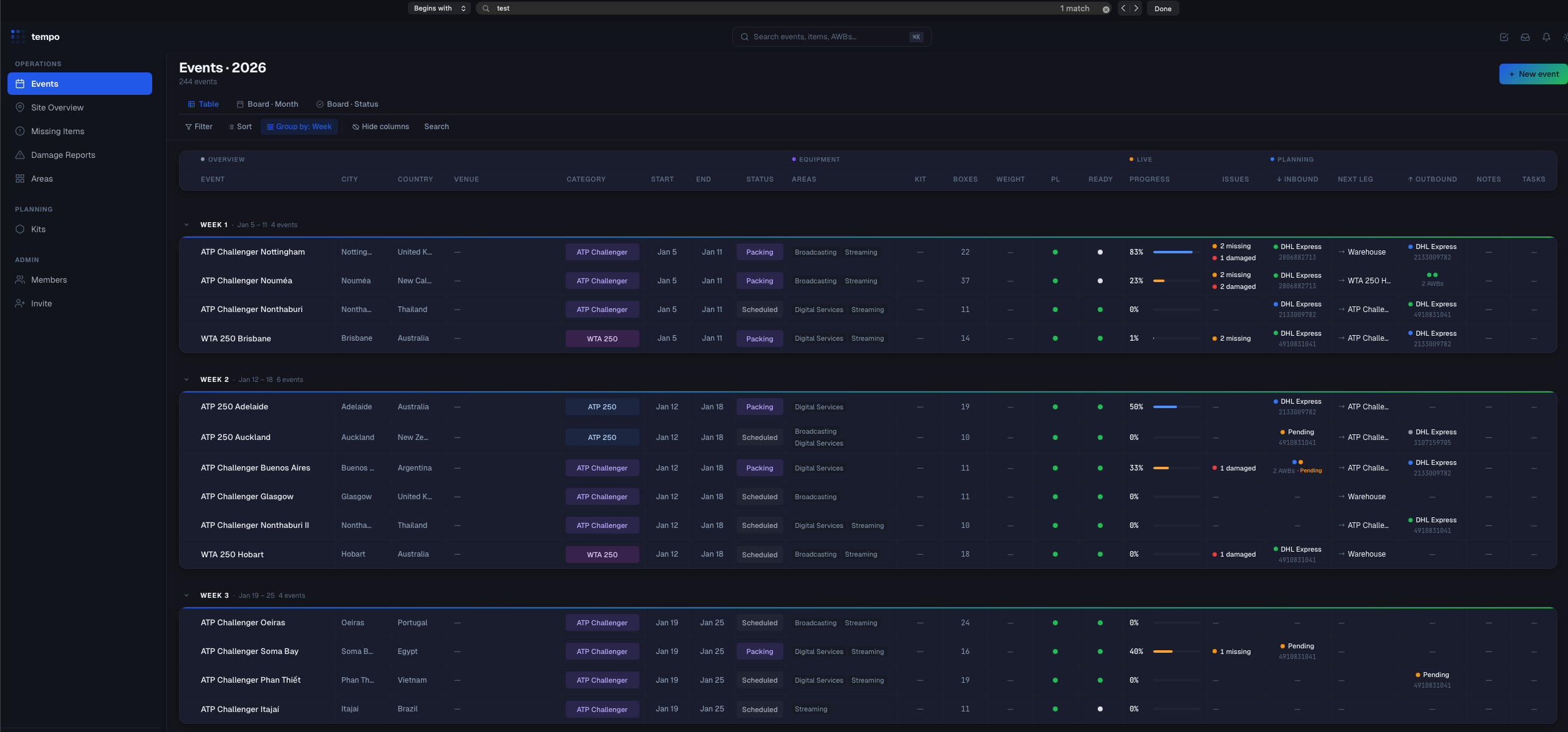

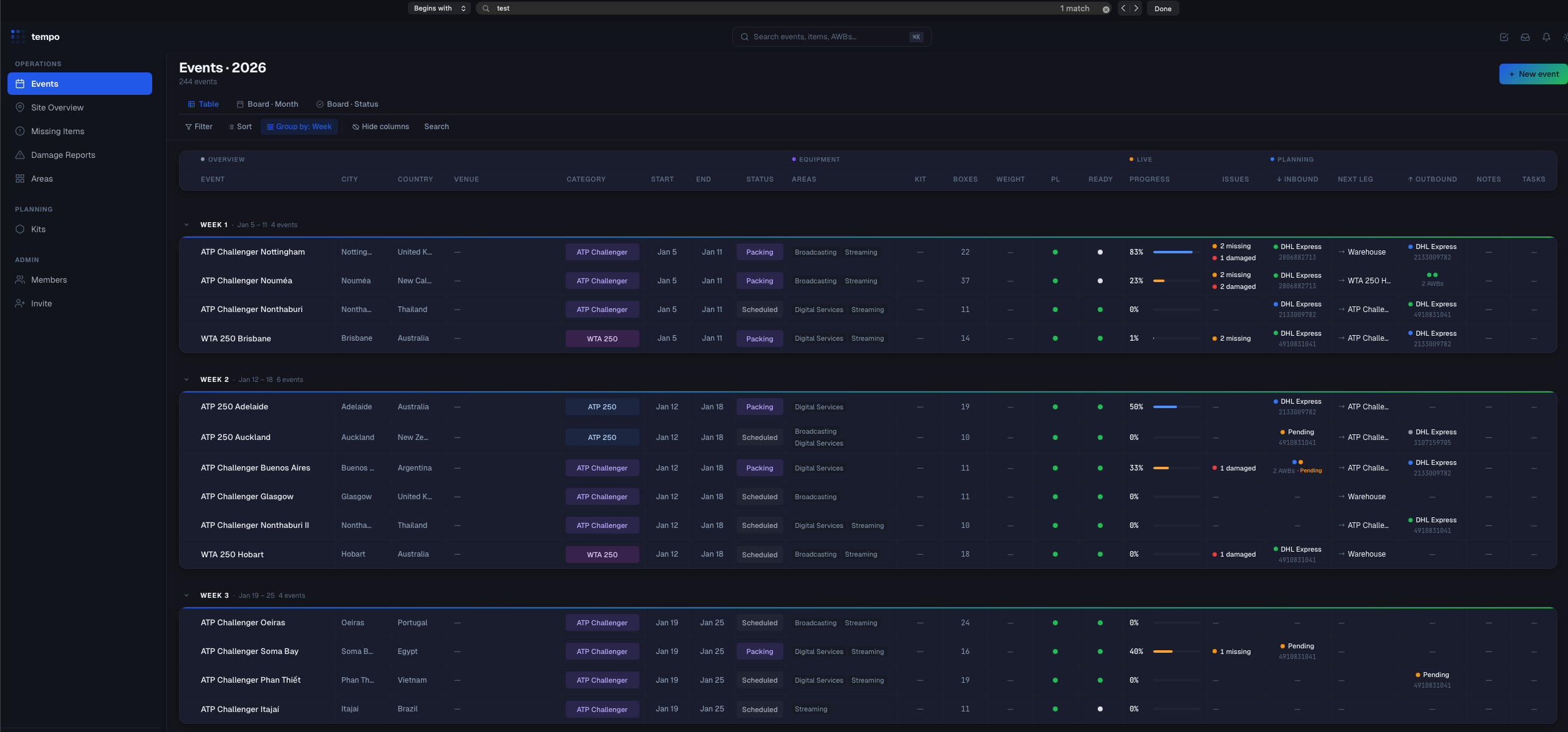

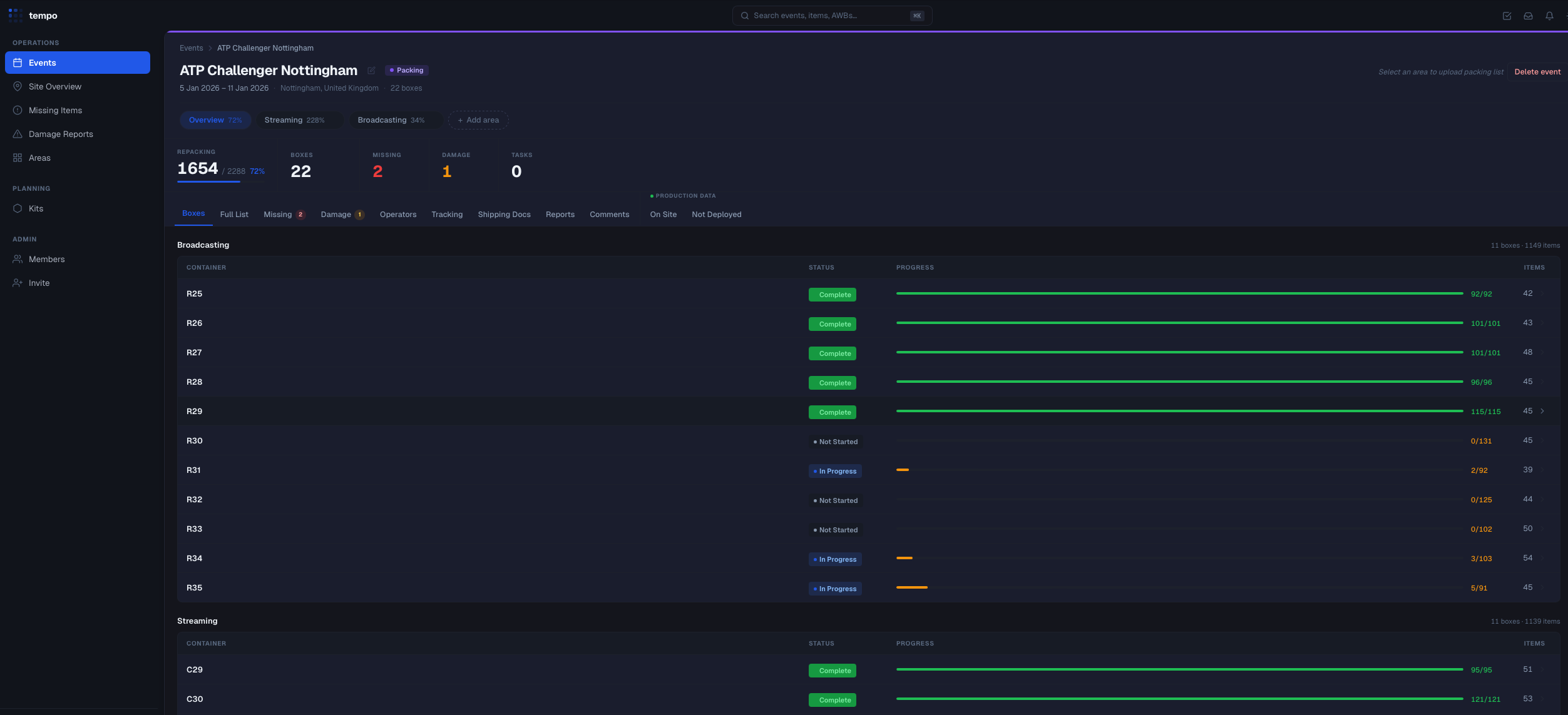

The dashboard

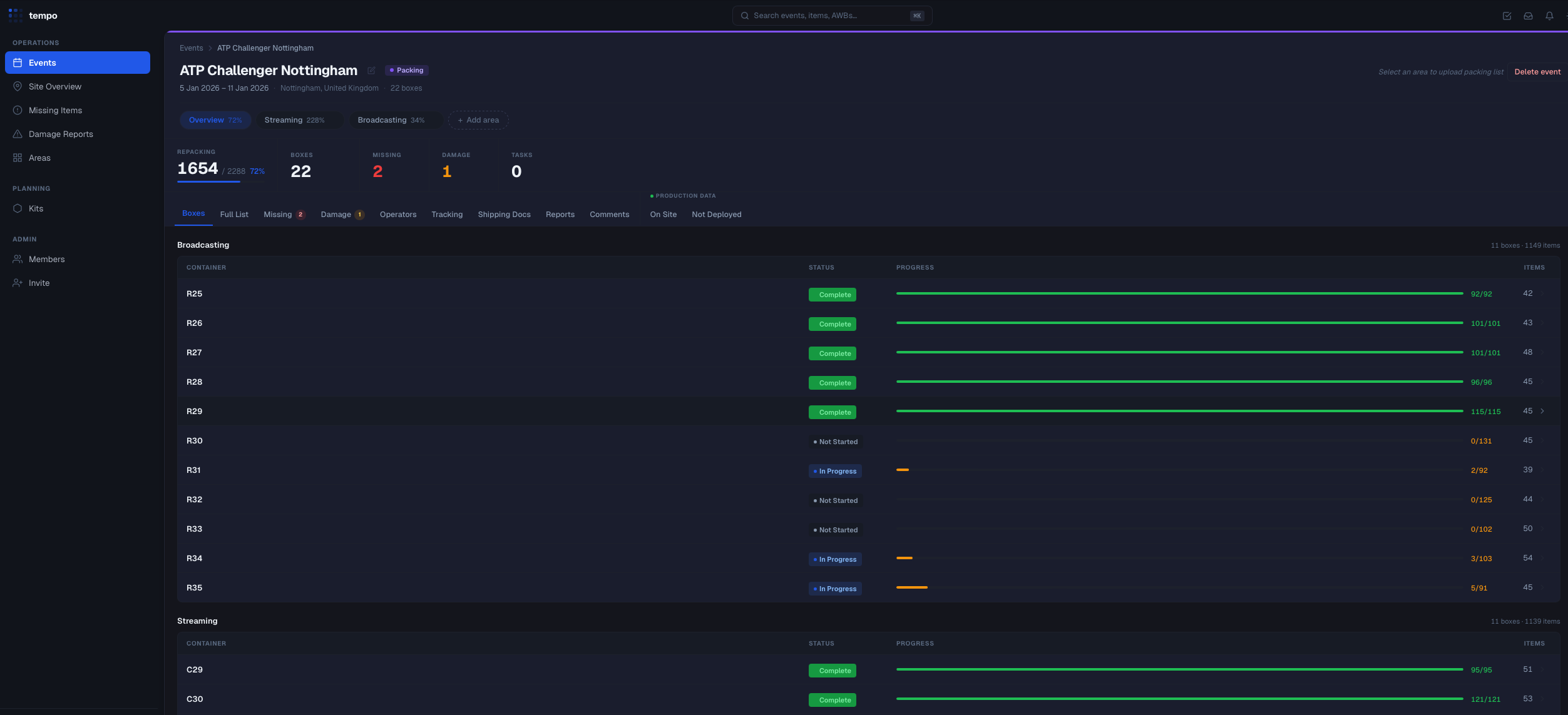

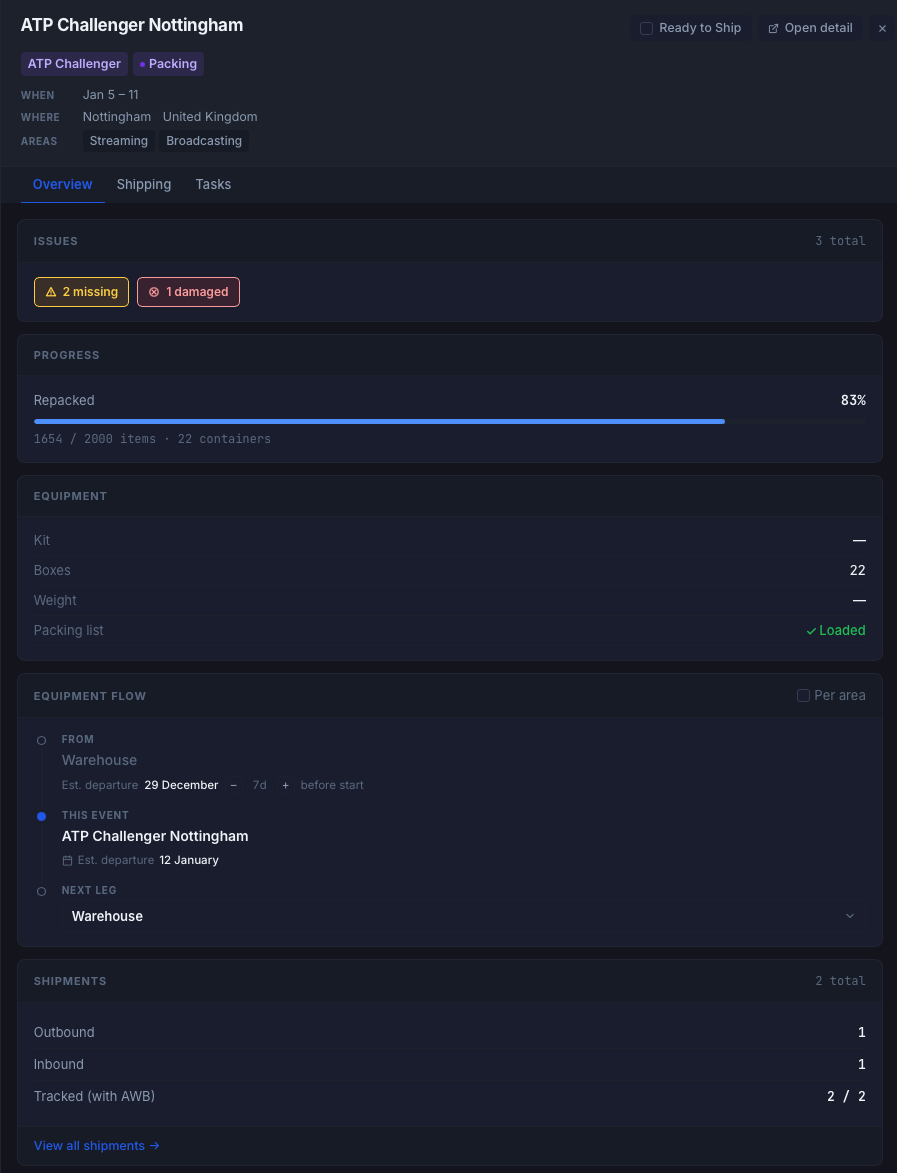

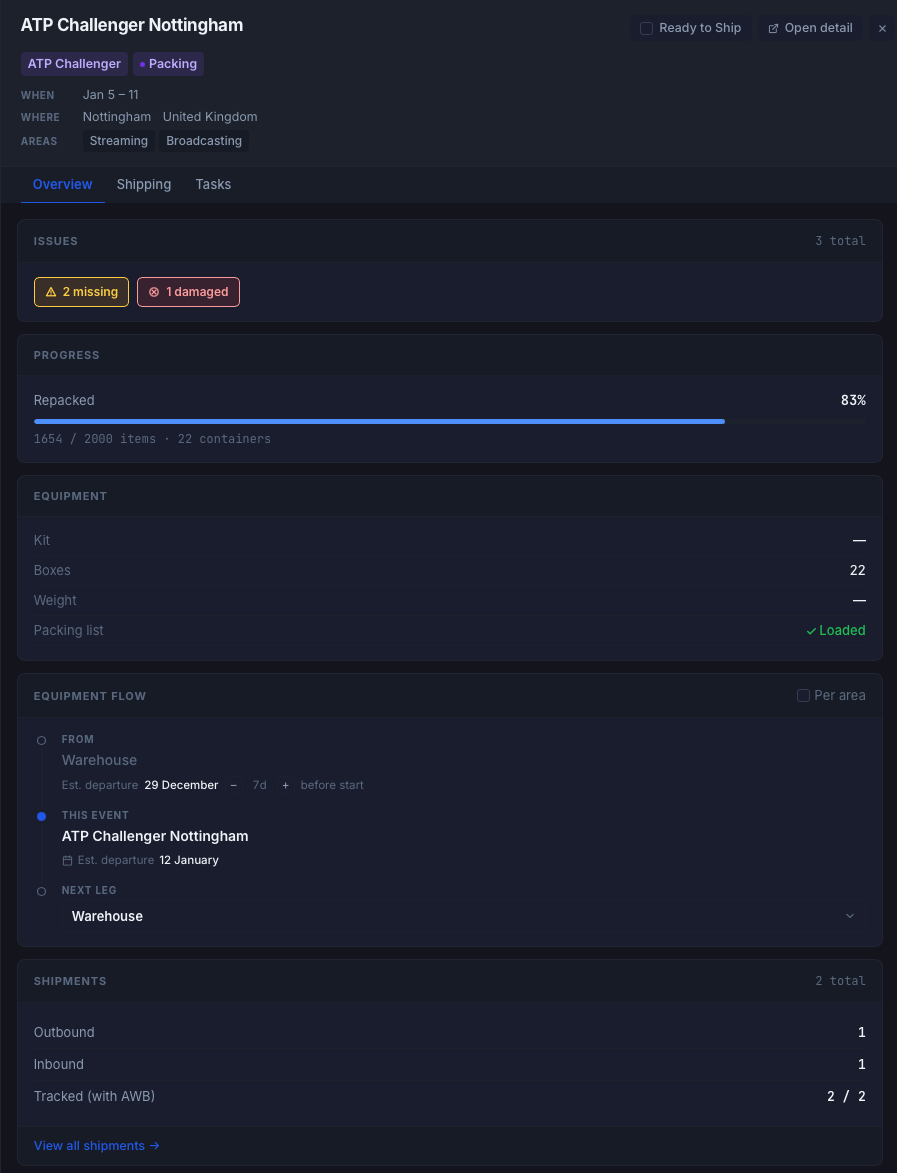


A tool I built for on-site equipment tracking at live events. It runs on mobile, works fully offline, and requires no account to use.

Repacking equipment onsite is hard. People are working fast, in poor conditions, off an Excel file or a paper list — trying to find one item under pressure is not a workflow. Things end up in the wrong cases, items go missing, damage doesn't get logged. Most of the time nobody finds out until the next event.

That was the problem I wanted to solve. Over the past months I built a tool called rebox.

Import a packing list as CSV, XLSX, or a text-readable PDF. Per-container files and master lists are both detected automatically. No list? Build one from scratch on site by scanning items into containers.

Point the camera at a barcode. The app shows which container the item belongs to. The operator taps to confirm.
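A minimal sketch of the lookup behind a scan, assuming the manifest is held in memory as rows with a barcode and a container name. The app itself is written in Swift; this is TypeScript to match the rest of the stack, and the `ManifestRow` shape and both function names are hypothetical.

```typescript
// Hypothetical manifest row; field names are assumptions for illustration.
interface ManifestRow {
  barcode: string;
  name: string;
  container: string;
}

// Build a barcode -> row index once so every scan is a constant-time lookup.
function buildBarcodeIndex(rows: ManifestRow[]): Map<string, ManifestRow> {
  const index = new Map<string, ManifestRow>();
  for (const row of rows) {
    index.set(row.barcode.trim(), row);
  }
  return index;
}

// Resolve a scanned code to its container, or undefined if the item is not on the list.
function lookupContainer(
  index: Map<string, ManifestRow>,
  scanned: string,
): string | undefined {
  return index.get(scanned.trim())?.container;
}

// Example data, invented for the sketch.
const barcodeIndex = buildBarcodeIndex([
  { barcode: "0001", name: "Wireless mic", container: "Case A" },
  { barcode: "0002", name: "DI box", container: "Case B" },
]);
```

An `undefined` result is the case where the operator would be scanning something that is not on any list, which the UI has to handle separately from a confirmed match.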

Mark an item missing, or log a damage report with a photo. Both are logged in the moment, not at the end of the job.

Assign items to user-defined positions — Main Stage, FOH, Court 1. The hub answers "where is this?" without opening a case.

Packing list, proof of delivery, pro-forma invoice, temporary export declaration. All generated on the device from the live manifest.


Once things were being logged on site, managers couldn't see what was happening. So a web view got added. Same data, different seat.

Operators on site and managers on a laptop see the same state within roughly half a second. When there's no connection, the phone keeps working.

Because every item gets tracked through the whole job, there's a byproduct: a detailed production log covering inventory state, on-site location, and service context. That's not what I built this for — but if anyone ever wants it later, the data is already there.

Things that become possible if someone picks it up:

All of that is later. The tool works today as a repack app, and that's enough.

It's on a private test database right now. I'm offering it with no conditions attached — use it as is, move it to our own infrastructure, or take it apart entirely. No cost, no project, no ask.

I just think the people doing this job deserve a better tool than a printed spreadsheet. Happy to walk anyone through it whenever it makes sense.

| Layer | Tech |
|---|---|
| iOS app | SwiftUI, iOS 16+, Firebase iOS SDK (Auth, Firestore, App Check planned), CoreXLSX, ZIPFoundation. SPM, no CocoaPods. |
| Web dashboard | React 19, TypeScript, Vite, Tailwind 4, Firebase JS SDK, React Router 7. |
| Backend | Cloud Functions for Firebase, TypeScript, Node 22. No owned servers. |
| Data | Firestore (single region). Persistent local cache on both clients. |
| Auth | Firebase Auth. Custom claims distinguish operator vs manager. |
| Shipping | 17track API via a Cloud Function webhook. |

Everything is org-scoped. State indexes are Cloud-Function-maintained denormalizations so summary pages read ~200 docs instead of fanning out across thousands of items.

    organisations/{orgID}
    ├─ events/{eventID}              EventDoc (managed from web)
    │  └─ areas/{areaID}             AreaSessionDoc (one per area per event)
    │     ├─ items/{itemID}          PackingItem (one row of a packing list)
    │     └─ comments/{commentID}    per-area notes
    ├─ missingItems/{itemID}         CF-maintained index
    ├─ damagedItems/{itemID}         CF-maintained index
    ├─ deployedItems/{itemID}        CF-maintained index
    ├─ operators/{uid}               OperatorDoc
    ├─ kits/{id}                     reusable packing-list templates
    └─ areas/{id}                    org-level area definitions

PackingItem carries three layers of state: inventory (repacked / missing / damaged), location (which on-site position), and service context (which area/zone). Together they form the production log.
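The three layers could be modelled roughly as below. This is a sketch only: the field names, the extra `"pending"` state for items not yet scanned, and the `itemPath` helper are assumptions, not the actual schema. Only the collection path follows the tree above.

```typescript
// "repacked" | "missing" | "damaged" come from the text; "pending" is an assumed
// placeholder for an item nobody has touched yet.
type InventoryState = "pending" | "repacked" | "missing" | "damaged";

// Hypothetical shape of one packing-list row.
interface PackingItem {
  id: string;
  name: string;
  inventory: InventoryState;  // layer 1: inventory state
  positionId: string | null;  // layer 2: on-site position, e.g. "Main Stage"
  areaId: string;             // layer 3: service context (area/zone)
}

// Firestore document path for an item, following the collection tree.
function itemPath(orgId: string, eventId: string, areaId: string, itemId: string): string {
  return `organisations/${orgId}/events/${eventId}/areas/${areaId}/items/${itemId}`;
}

// Assumption: an item counts as deployed once it has an on-site position.
function isDeployed(item: PackingItem): boolean {
  return item.positionId !== null;
}
```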

Every user action is local-first. UI never waits on a network round-trip.

All stats and state indexes are CF-maintained. Clients read the denormalized indexes; they never fan out over all sessions.

| Function | Type | Purpose |
|---|---|---|
| updateAreaStats | Firestore trigger (item writes) | Keeps area-level counts current. Maintains missingItems / damagedItems / deployedItems atomically in the same batch. |
| updateEventStats | Firestore trigger (area writes) | Sums area stats into the parent event. Skips deletes and imports. |
| onEventCommentWrite | Firestore trigger | Denormalizes comment / unresolved counts onto the event doc. |
| onTaskWrite | Firestore trigger | Denormalizes open / done task counts onto the event doc. |
| onAreaDeleted | Firestore trigger | Cascades cleanup when an area is removed. |
| deleteEventRecursive | Callable (manager-only) | Deletes an event and all subcollections. |
| rebuildStateIndexes | Callable (manager-only) | One-time backfill for recovery. No UI button. |
| inviteOperator / inviteManager | Callable | Creates an Auth account and operator doc, sends a password-reset email. |
| trackingWebhook | HTTP | 17track pushes AWB status updates here. Validated by shared secret. |
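To make the updateAreaStats row concrete, here is one plausible shape for its core logic, written as pure functions so it runs without Firebase. The trigger's real code is not shown here, so the types, the `"pending"` state, and the names `applyItemWrite` and `indexOps` are all assumptions. In a real trigger the returned counters and index operations would be committed together in one Firestore batch.

```typescript
// All names below are hypothetical; "pending" is an assumed initial state.
type InventoryState = "pending" | "repacked" | "missing" | "damaged";
interface ItemSnap { state: InventoryState; deployed: boolean }
interface AreaStats { total: number; repacked: number; missing: number; damaged: number; deployed: number }
type IndexName = "missingItems" | "damagedItems" | "deployedItems";
interface IndexOp { index: IndexName; op: "set" | "delete" }

// What a single item snapshot contributes to the area counters (null = no document).
function contribution(item: ItemSnap | null): AreaStats {
  return {
    total: item ? 1 : 0,
    repacked: item?.state === "repacked" ? 1 : 0,
    missing: item?.state === "missing" ? 1 : 0,
    damaged: item?.state === "damaged" ? 1 : 0,
    deployed: item?.deployed ? 1 : 0,
  };
}

// Apply one item write (before -> after) to the counters as a delta,
// so the trigger never has to re-read every item in the area.
function applyItemWrite(stats: AreaStats, before: ItemSnap | null, after: ItemSnap | null): AreaStats {
  const b = contribution(before);
  const a = contribution(after);
  return {
    total: stats.total + a.total - b.total,
    repacked: stats.repacked + a.repacked - b.repacked,
    missing: stats.missing + a.missing - b.missing,
    damaged: stats.damaged + a.damaged - b.damaged,
    deployed: stats.deployed + a.deployed - b.deployed,
  };
}

// Whether an item snapshot belongs in a given state index.
function belongsIn(item: ItemSnap | null, index: IndexName): boolean {
  if (item === null) return false;
  if (index === "missingItems") return item.state === "missing";
  if (index === "damagedItems") return item.state === "damaged";
  return item.deployed;
}

// Index writes needed for one item write: refresh the copy where the item
// belongs now, delete it where it belonged before but no longer does.
function indexOps(before: ItemSnap | null, after: ItemSnap | null): IndexOp[] {
  const names: IndexName[] = ["missingItems", "damagedItems", "deployedItems"];
  const ops: IndexOp[] = [];
  for (const index of names) {
    if (belongsIn(after, index)) ops.push({ index, op: "set" });
    else if (belongsIn(before, index)) ops.push({ index, op: "delete" });
  }
  return ops;
}
```

Working from deltas keeps each trigger invocation at a constant number of reads and writes regardless of how many items the area holds.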

One persistent events listener mounted above the router (EventsContext). Per-event area listeners attach when a specific event is opened and detach when it closes. Summary pages — Missing, Damage, Site Overview — read the CF-maintained state indexes directly, never the item docs.
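The attach-on-open, detach-on-close behaviour can be sketched as a small scope object. `AreaListenerScope` and its methods are hypothetical names; in the dashboard the `attach` callback would presumably wrap a Firestore `onSnapshot` call, which returns an unsubscribe function of this shape.

```typescript
type Unsubscribe = () => void;

// Holds at most one live per-event listener and guarantees the previous one
// is detached before a new one is attached.
class AreaListenerScope {
  private attach: (eventId: string) => Unsubscribe;
  private current: { eventId: string; stop: Unsubscribe } | null = null;

  constructor(attach: (eventId: string) => Unsubscribe) {
    this.attach = attach;
  }

  // Called when an event page opens.
  open(eventId: string): void {
    if (this.current !== null && this.current.eventId === eventId) return; // already listening
    this.close();
    this.current = { eventId, stop: this.attach(eventId) };
  }

  // Called when the event page closes.
  close(): void {
    if (this.current !== null) {
      this.current.stop();
      this.current = null;
    }
  }
}
```

In a React app the same lifecycle usually lives in an effect whose cleanup function calls the unsubscribe, but the invariant is the same: one open event, one set of area listeners.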

Design tokens live in a single tokens.css; light and dark themes are toggled via data-theme on <html>. A pre-paint inline script reads localStorage to prevent theme flash.
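A sketch of what such a pre-paint script might do. The storage key `"theme"` and the fallback to the OS colour-scheme preference are assumptions; the text only says the script reads localStorage and that themes are switched via `data-theme` on `<html>`.

```typescript
type Theme = "light" | "dark";

// A valid stored choice wins; otherwise fall back to the OS preference (assumed behaviour).
function resolveTheme(stored: string | null, prefersDark: boolean): Theme {
  if (stored === "light" || stored === "dark") return stored;
  return prefersDark ? "dark" : "light";
}

// In the browser this would run inline in <head>, before first paint.
// The guard lets the snippet load outside a browser as well.
const g = globalThis as any;
if (g.document) {
  try {
    const prefersDark = g.matchMedia("(prefers-color-scheme: dark)").matches;
    const stored = g.localStorage.getItem("theme"); // "theme" is a hypothetical key
    g.document.documentElement.dataset.theme = resolveTheme(stored, prefersDark);
  } catch {
    // Storage can be blocked (e.g. privacy modes); the CSS default theme then applies.
  }
}
```

Because the attribute is set before the stylesheet paints anything, the page never renders in the wrong theme and then flips.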

Firestore's persistent cache keeps resume tokens alive between sessions. If the browser reconnects within 30 minutes, a listener resumes from a delta rather than re-reading everything — that's the main lever on read cost.
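The effect on read cost can be approximated with a deliberately simplified model: within the resume window only changed documents are read, after it the whole result set is read again. `readsOnReconnect` is a hypothetical helper for back-of-envelope estimates, not part of any SDK, and it ignores finer billing details.

```typescript
// Window after which a listener is treated as a brand-new query.
const RESUME_WINDOW_MS = 30 * 60 * 1000;

// Rough estimate of document reads when a listener reattaches.
function readsOnReconnect(offlineMs: number, totalDocs: number, changedDocs: number): number {
  return offlineMs <= RESUME_WINDOW_MS ? changedDocs : totalDocs;
}

// A ~200-doc summary page with 3 changes while the tab was asleep:
const shortGap = readsOnReconnect(5 * 60 * 1000, 200, 3);  // resumes from a delta
const longGap = readsOnReconnect(45 * 60 * 1000, 200, 3);  // full re-read
```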

| Responsibility | Owner |
|---|---|
| Create / delete events, invite users, assign operators | Web only |
| Upload packing lists | Web primarily; iOS also builds lists from scratch on site |
| Operator scans, damage flags, missing flags, deployment | iOS only |
| Shipping-document generation | Both platforms have the same generators |
| Area-stats aggregation | Cloud Function; both platforms observe |
| State index collections | CF-maintained; both read, neither writes |
| AWB tracking | Web only (callable + webhook) |

A bulk import sets area.importing = true so updateAreaStats skips stat work during the import. Final stats are written client-side once after the batch.

Hosting: pure-logic unit tests with vitest (parsers, status derivation). Fast, no browser.

Cloud Functions: Firebase emulator harness. npm run test:emu builds the CFs, boots the emulator on a fake project, runs vitest against real Firestore triggers, and tears down — ~10 s end-to-end. Zero impact on prod.